Data Agents for Complex Data Analysis

Build autonomous AI agents that decompose complex analytical questions, orchestrate multi-step reasoning over heterogeneous data sources, and deliver accurate answers.

News & Updates

Latest announcements and important updates. All dates follow AoE (UTC-12).

Competition Rules Page Released

The official Competition Rules page is now available on the website, detailing dataset format, submission process, evaluation procedures, and related constraints. Visit dataagent.top/rules for complete details.

Starter Kit Released

The official KDD Cup 2026 starter kit is now available on GitHub. It includes a ReAct baseline agent, dataset loader, and CLI tooling to help participants get started quickly.

Registration Now Open

Team registration is now open. The Team Leader submits initial registration, and all members will receive a verification email within 24 hours to complete the process.

Official Community Channels Launched

Our official WeChat channel (数据智能与分析实验室 DIAL) and Discord server (KDD Cup 2026 | DataAgents) are now live. Join to get real-time updates and connect with other participants.

Phase 1 Demo Dataset README Updated

The README in the Phase 1 demo dataset has been updated to help participants better understand the dataset structure.

Phase 1 Demo Dataset Released

The Phase 1 demo dataset is now available. The official download link has been added to the DataAgent-Bench section.

Official Website Launched

The KDD Cup 2026 competition website is now live, with competition details, schedule, and benchmark information.

Why Data Agents?

Traditional Data+AI systems have made significant strides in optimizing specific tasks, but they still rely heavily on human experts to orchestrate the end-to-end pipeline. This manual orchestration is a major bottleneck, limiting the scalability and adaptability of data analysis.

We define a Data Agent as a holistic architecture designed to orchestrate Data+AI ecosystems by tackling data-related tasks through integrated knowledge comprehension, reasoning, and planning capabilities. This competition challenges you to build truly autonomous data analysis systems that go far beyond single-shot question answering.

Decompose & Plan

Break down high-level analytical questions into multi-step, executable plans autonomously.

Tool Selection & Invocation

Select and invoke appropriate tools — Python scripts, SQL queries, API calls — at each reasoning step.

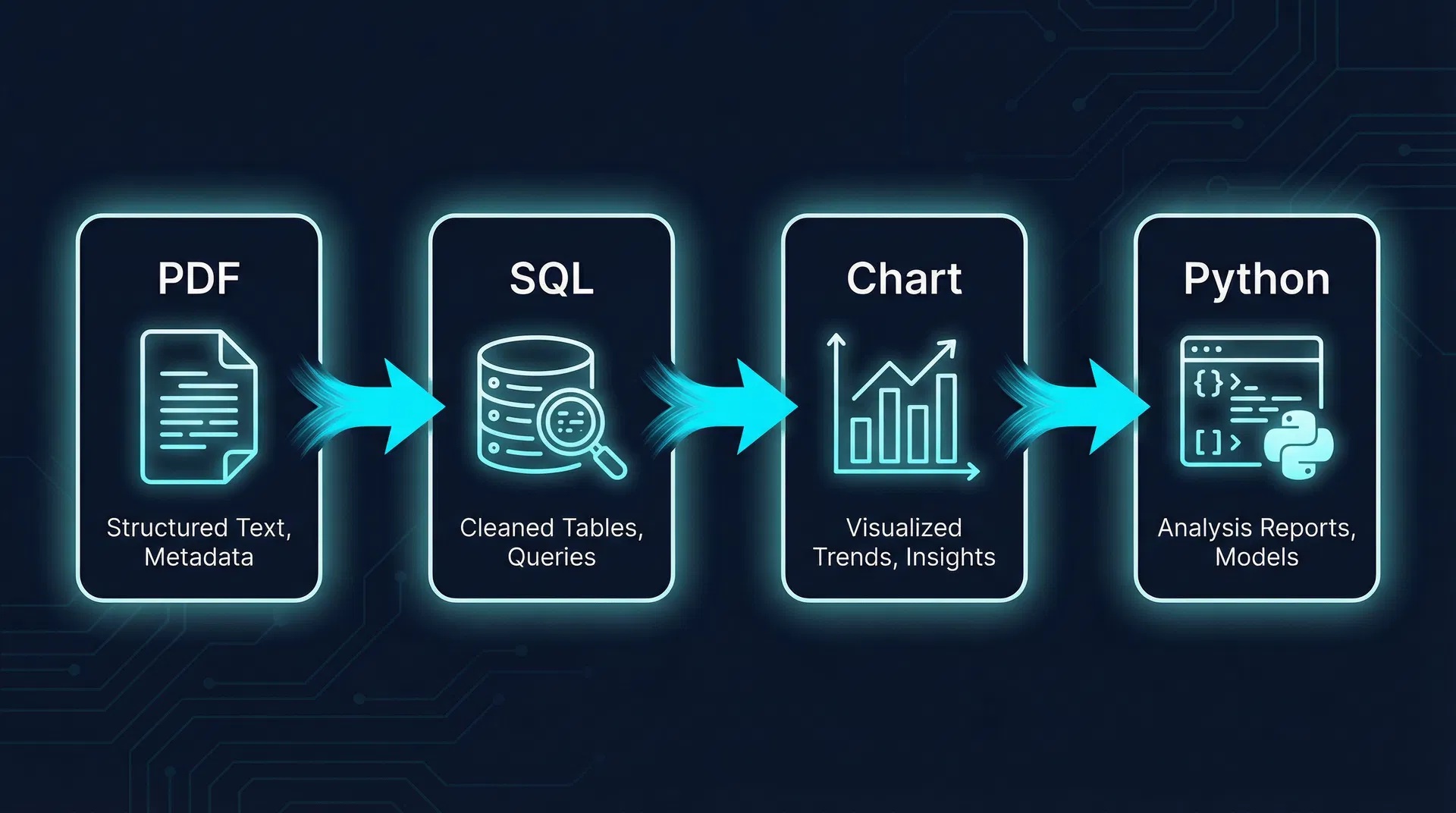

Heterogeneous Data Reasoning

Reason over structured tables, unstructured documents, charts, and multi-modal data sources.

Result Synthesis

Synthesize intermediate results across multiple steps to arrive at a final, accurate answer.

Broader Impact

Robust Data Agents have the potential to revolutionize how we interact with data. They can democratize data science by enabling non-experts to perform sophisticated analyses through natural language. For enterprises, they can automate the work of data analysts and database administrators, leading to massive efficiency gains. This competition will stimulate new research in agent architectures, planning algorithms, tool use, and self-reflection for AI systems.

DataAgent-Bench

Each task in DataAgent-Bench presents a self-contained data analysis challenge. The agent receives a heterogeneous data package and a high-level natural language question, and must autonomously orchestrate a complex reasoning process to produce the final answer.

Input: Data Package

Heterogeneous, multi-modal data sources

Non-Linear Reasoning Topology

Unlike simple linear chains, real-world data analysis often requires branching (parallel sub-queries), loops (iterative refinement), and convergence (merging results from multiple paths). DataAgent-Bench captures this complexity with DAG-structured reasoning graphs.

Reasoning Topology Patterns

Linear chain: each step depends on the previous step's output, so errors propagate downstream.

Branching: parallel sub-queries run across different data sources, then their results are merged.

Loop: iterative refinement in which the agent revisits and corrects intermediate results.

Example Task

"Our Q3 regional market analysis report identifies the region with the strongest year-over-year growth. For that region, pull the total actual sales revenue of all Electronics products from our sales database. Then, compare this figure against the quarterly sales target shown in the performance dashboard chart. Report the percentage difference."

Expected Reasoning Graph

This example demonstrates a branching pattern: after identifying the target region, the agent spawns two parallel sub-tasks (database query and chart analysis), then merges results for the final computation.

Read PDF report → identify top-growth region

Query sales WHERE region = "East Asia" AND category = "Electronics"

Read performance dashboard chart → extract Q3 target

Compute percentage difference: (4,200,000 - 3,800,000) / 3,800,000
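The branching graph above can be sketched as a small dependency graph executed in topological order. This is only an illustration, not the starter kit's implementation: the step names are hypothetical, each step is a stand-in that returns the value quoted in the example, and reading 4,200,000 as actual sales and 3,800,000 as the target is an assumption based on the formula in the last step.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical step names. Each step maps to the set of steps it depends on.
GRAPH = {
    "identify_region": set(),
    "query_sales": {"identify_region"},        # branch 1
    "read_chart_target": {"identify_region"},  # branch 2
    "percentage_difference": {"query_sales", "read_chart_target"},  # merge
}

# Stand-in implementations: a real agent would parse the PDF, run SQL,
# and read the chart. Values are the ones quoted in the example task.
STEPS = {
    "identify_region": lambda r: "East Asia",
    "query_sales": lambda r: 4_200_000,        # assumed: actual sales
    "read_chart_target": lambda r: 3_800_000,  # assumed: Q3 target
    "percentage_difference": lambda r: (
        (r["query_sales"] - r["read_chart_target"])
        / r["read_chart_target"] * 100
    ),
}


def run(graph, steps):
    """Run every step after its dependencies, passing earlier results along."""
    results = {}
    for name in TopologicalSorter(graph).static_order():
        results[name] = steps[name](results)
    return results


results = run(GRAPH, STEPS)
print(f'{results["percentage_difference"]:.2f}%')  # 10.53%
```

The two branches share only the identified region, so an agent could run them concurrently; the sketch runs them sequentially to stay short.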

Resources

The starter kit works across both phases. Demo datasets are released per phase alongside difficulty information and download links.

Starter Kit

Phase 1 & 2

HKUSTDial / kddcup2026-data-agents-starter-kit

Phase 1 Demo Dataset

A preview package for the Phase 1 setting. The same package is mirrored on Google Drive and Baidu Netdisk—use whichever is faster for you.

| Level | Modalities | Core Challenge | Document Scale |
|---|---|---|---|
| Easy | Structured files such as JSON/CSV + knowledge documents | Code generation for data analysis workflows, such as Python execution | Short context |
| Medium | Structured files such as JSON/CSV + databases + knowledge documents | Text-to-SQL and multi-source data analysis | Moderate context |
| Hard | Structured files such as JSON/CSV + databases + data documents + knowledge documents | Reasoning over unstructured data documents | ~10K-128K tokens |
| Extreme | Same modality combination as Hard | Context engineering and memory under ultra-long document inputs | >128K tokens |

Phase 2 Demo Dataset

Phase 2 demo dataset details, difficulty classification, and download access will be announced before Phase 2 begins.

Tracks

The competition has two phases. Phase 1 uses a single main track, and Phase 2 opens two subtracks for qualified teams, each with different goals and evaluation styles.

Phase 1

All registered teams compete on one public leaderboard with automated evaluation.

Phase 2

The final stage introduces two subtracks, separating benchmark ranking from system experience and product design.

Phase 2 Subtracks

Qualified teams continue in Phase 2 through one of two subtracks, depending on their qualification status and the final competition rules.

Leaderboard Subtrack

This subtrack keeps the core competition format, but uses a more challenging Phase 2 benchmark with new modalities such as data images and data videos. Teams are ranked automatically by answer accuracy.

Creative Subtrack

Teams build mature, easy-to-use, interface-friendly data agent systems with strong interaction design and transparent decision processes.

How qualification works

Teams that place above the Phase 1 qualification cutoff will advance to Phase 2. All qualified teams may freely choose either the leaderboard subtrack or the creative subtrack.

In addition, to broaden Phase 2 participation and recognize promising systems that fall just short of the main qualification cutoff, selected teams near the Phase 1 cutoff will automatically advance to the creative subtrack. The exact cutoff percentages will be announced after registration scale is finalized, so participants can understand the final advancement thresholds before Phase 2 begins.

Scoring & Evaluation

The leaderboard track uses a binary column-matching accuracy score. A prediction receives 1 only when it completely and accurately covers all gold columns; otherwise it receives 0. Creative-track submissions are reviewed jointly by sponsors and the organizing committee.

Leaderboard Scoring Rule

The automatic metric checks whether every gold column is fully and correctly covered by the prediction. Matching is done column by column, and each column is compared as an unordered vector of values only, without using column names.

Column-As-Vector Matching

Each answer column is treated as one unordered column-value vector. Column names are not part of the match.

Score 1

A prediction is counted as correct only when it completely and accurately contains every gold column.

Score 0

If any gold column is missing or mismatched, the prediction is scored as incorrect.

Example

The prediction contains columns A, B, C.

The gold answer requires columns B, C.

Result: Score = 1

Even though the prediction contains an extra column A, it still receives full credit because it completely and accurately contains all gold columns B and C.

Extra predicted columns do not invalidate the answer, but any missing or mismatched gold column makes the score 0.

Column matching uses only the values inside each column vector. Column names are ignored during scoring.
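A minimal sketch of the scoring rule described above, for checking answers locally. It is not the official evaluator. It assumes values are compared by exact equality with no normalisation of number or string formats, and that duplicate values within a column count (each column is treated as a multiset). The function names are illustrative.

```python
from collections import Counter
from typing import Hashable, Sequence

Column = Sequence[Hashable]


def column_key(column: Column) -> frozenset:
    """Order-insensitive fingerprint of a column: the multiset of its values."""
    return frozenset(Counter(column).items())


def score(pred_columns: Sequence[Column], gold_columns: Sequence[Column]) -> int:
    """Return 1 if every gold column appears among the predicted columns, else 0.

    Column names are never consulted; only the values are compared.
    Extra predicted columns are ignored.
    """
    pred_keys = {column_key(col) for col in pred_columns}
    return int(all(column_key(col) in pred_keys for col in gold_columns))


# Prediction has columns A, B, C; gold requires B and C (row order differs).
pred = [[1, 2, 3], ["x", "y", "z"], [10.5, 20.0, 30.25]]
gold = [["z", "x", "y"], [30.25, 10.5, 20.0]]
print(score(pred, gold))      # 1: the extra column A does not hurt
print(score(pred[:2], gold))  # 0: gold column C is missing
```

Because the score is binary per task, returning a few extra candidate columns is harmless under this rule, while dropping or altering any gold column costs the whole task.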

Evaluation Modes

Leaderboard Ranking

Leaderboard submissions are scored automatically with the binary column-matching metric for immediate ranking feedback.

Creative Track Assessment

Creative-track systems are reviewed jointly by sponsors and the organizing committee, with emphasis on interaction design, transparency, innovation, and overall usability.

Competition Timeline

The official schedule runs from March 15 to August 9, 2026, covering registration, Phase 1, qualification review, Phase 2 with two subtracks, final verification, and the KDD announcement. All dates are interpreted as end-of-day AoE (UTC-12) unless otherwise noted.
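To see what an end-of-day AoE deadline means in another time zone, the conversion can be sketched as follows. This assumes "end of day" means 23:59:59 in a fixed UTC-12 offset; the helper name is illustrative, and August 9, 2026 is used only because it is the last date in the schedule.

```python
from datetime import date, datetime, time, timedelta, timezone

# Anywhere on Earth: a fixed offset of UTC-12 with no daylight saving.
AOE = timezone(timedelta(hours=-12), name="AoE")


def aoe_deadline_utc(day: date) -> datetime:
    """Return the last second of `day` in AoE, expressed in UTC."""
    end_of_day = datetime.combine(day, time(23, 59, 59), tzinfo=AOE)
    return end_of_day.astimezone(timezone.utc)


print(aoe_deadline_utc(date(2026, 8, 9)).isoformat())
# 2026-08-10T11:59:59+00:00
```

An AoE deadline therefore falls at midday UTC on the following calendar day.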

Competition Launch & Demo Dataset Release

The competition opens and the demo dataset is published for participants.

Registration

Teams register and finalize their participant roster during the official registration window.

Phase 1 Competition

Registered teams compete in Phase 1 on the public leaderboard.

Leaderboard Freeze & Qualification Review

The leaderboard is frozen and submissions are verified. Teams above the Phase 1 qualification cutoff advance to Phase 2 and may choose either subtrack; selected teams near the cutoff are automatically admitted to the creative subtrack.

Phase 2 Competition

Qualified teams enter Phase 2 and compete in either the leaderboard subtrack, which uses more challenging data and new modalities such as data images and data videos, or the creative subtrack for mature, user-friendly data agent systems.

Final Freeze & Award Review

The final leaderboard is frozen, manual checks are completed, and award-winning teams are confirmed.

Award Notification

Winning teams receive official notification of the final results.

KDD 2026 Announcement

Formal announcement of winners at KDD 2026.

How to Register

Registration is a four-step process. The team leader registers first, then all members complete identity verification individually.

Initial Registration

Team Leader Only

The Team Leader submits the team's basic information via the registration form.

Registration Form

Get Verification Code

All Members

Each team member will receive an email from [email protected] with a unique verification code within 24 hours. Previously registered email addresses will be rejected.

Sign Consent Form

All Members

Every member will receive a second email containing a link to the Informed Consent Form. Each member must complete the form and enter their personal verification code to validate their application.

Final Confirmation

You're In

Once verified, each member will receive a "Registration Confirmed" email. You are now officially enrolled!

Prizes

Prize allocation is defined separately for the leaderboard track and the creative track. All prizes are paid in Chinese Yuan (CNY); USD figures are estimates based on an approximate exchange rate.

Leaderboard Track

Main benchmark ranking awards

Creative Track

Product and system design awards

Awards in the creative track are subject to final committee decision.

* Prizes are disbursed in Chinese Yuan (CNY) by the sponsoring organization. USD amounts shown above are estimates based on an approximate exchange rate of 1 USD ≈ 6.91 CNY at the time of publication. The actual CNY amount is fixed; the final USD equivalent will vary with the prevailing exchange rate at the time of payment. All prize amounts listed above are before tax. Winners are responsible for any taxes, withholdings, or reporting obligations arising from accepting a prize, as required by applicable law.
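The note above implies that the USD equivalent of a fixed CNY prize moves with the exchange rate. A small sketch of that arithmetic follows. The 10,000 CNY amount and the 7.20 rate are purely hypothetical and are not taken from the prize table; only the 6.91 rate comes from the note.

```python
def cny_to_usd(amount_cny: float, cny_per_usd: float = 6.91) -> float:
    """Convert a fixed CNY amount to USD at the given rate, rounded to cents."""
    if cny_per_usd <= 0:
        raise ValueError("exchange rate must be positive")
    return round(amount_cny / cny_per_usd, 2)


example_cny = 10_000  # hypothetical amount, for illustration only

print(cny_to_usd(example_cny))        # at the published rate of 6.91
print(cny_to_usd(example_cny, 7.20))  # at a hypothetical later rate
```

The CNY figure stays the same in both calls; only the estimated USD equivalent changes.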

Beyond Prizes

KDD Cup Workshop Presentation

Winning teams will have the opportunity to present their solutions at the KDD Cup Workshop at KDD 2026, a dedicated half-day session providing significant visibility for their work to the broader data mining and AI community.

Community Recognition

Top-performing teams will be recognized at the formal KDD 2026 Winners Announcement ceremony, gaining visibility among leading researchers and practitioners in the field.

Organizing Committee

The competition is coordinated by a set of general chairs and several committee chairs who lead registration, publicity, data, and evaluation.

General Chairs

These four members oversee the competition as a whole and coordinate the overall direction.

Yuyu Luo

Primary Contact, General Chair, Assistant Professor

HKUST (Guangzhou) & HKUST

Research at the intersection of Data and AI, focusing on Data Agents and Data-centric AI. 50+ publications in top-tier DB and AI venues (SIGMOD, VLDB, KDD, ICML, NeurIPS, ICLR). Best-of-SIGMOD 2023 Papers recipient. Co-organized the LLM+Vector Data Workshop at ICDE 2026 and the Agentic Data System Workshop at VLDB 2026, and presented Data Agent tutorials at SIGMOD and VLDB.

Homepage

Guoliang Li

General Chair, Professor

Tsinghua University

ACM Fellow and IEEE Fellow. Research focuses on learning-based databases and data-centric AI. VLDB 2017 Early Research Contribution Award recipient. Served as SIGMOD 2021 General Co-Chair and ICDE 2027 PC Co-Chair.

Homepage

Nan Tang

General Chair, Associate Professor

HKUST (Guangzhou) & HKUST

ACM Distinguished Member. Research interests include AI4DB and data-centric AI. Recipient of the VLDB 2010 Best Paper Award and the SIGMOD 2024 Research Highlight Award. Co-organized the KDD Cup 2024 CRAG Challenge.

Homepage

Boyan Li

Primary Contact, General Chair, PhD Student

HKUST (Guangzhou)

Research focuses on Text-to-SQL and Data Agents. Published 14 papers in top venues including KDD, ICML, NeurIPS, and VLDB.

Homepage

Committee Chairs

Different operational areas are led by dedicated chairs, with some members covering multiple responsibilities.

Zhengxuan Zhang

PhD Student

HKUST (Guangzhou)

Research focuses on Document AI, Information Extraction, and Database Systems, with several papers published in top-tier conferences and journals.

Homepage

Yupeng Xie

PhD Student

HKUST (Guangzhou)

Research focuses on Data Agents and Data Visualization. Published papers in top venues including VLDB, SIGMOD, ICLR, and IEEE VIS.

Homepage

Zhuowen Liang

PhD Student

HKUST (Guangzhou)

Research focuses on Information Extraction and Document AI. Published several papers in top venues including ICLR and MM.

Homepage

Yuan Li

PhD Student

Tsinghua University

Research focuses on Unstructured Data Analysis and Data Agents. Published papers in VLDB.

Jiayi Zhang

PhD Student

HKUST (Guangzhou)

Research focuses on Language Agents. Published papers in top venues including ICLR, ICML, and NeurIPS.

Homepage

Xiaotian Lin

PhD Student

HKUST (Guangzhou)

Research focuses on Data-centric AI and Data Agents. Published papers in top venues including VLDB, ICLR, and ACL.

Join the Community

Connect with other participants, get the latest updates, and reach the organizing team through our official channels.

WeChat Official Account

数据智能与分析实验室 DIAL

Follow the account and reply "KDD进群" ("join the KDD group") to get the WeChat group QR code, or "KDD大赛" ("KDD competition") for the competition FAQ and resources.

Discord

Join our Discord server to discuss with participants worldwide and get real-time updates from the organizing team.

KDD Cup 2026 | DataAgents →